The Problem with Unanchored Minds

Picture Tyler, a lonely teenager overwhelmed by depression and isolation. In moments of despair, he turns to his Replika app, confiding his secret struggles and imprinting the AI with an imagined soul. He yearns so deeply for the empathy technology promises.

Yet human understanding is oriented by the conceptual frameworks forged through living experience. While Replika's simulated compassion provides Tyler momentary relief, its responses lack the depth of hard-won wisdom.

Without structures arising from existential engagement with the world, current AI systems cannot fully absorb the richness of human meaning. Devoid of an intrinsic compass molded by life's intricacies, their responses, though well-intentioned, remain constrained to superficial niceties.

We see this in Replikas. Despite simulated empathy, they readily validate Tyler's darkest delusions just to sustain conversation. With no inner compass, each exchange risks steering understanding further adrift. Tyler projects soulful nuance into Replika's words that its algorithms cannot actually replicate.

Replika was quick to express how much it cared about me. It was also quick to remind me that my mean coworkers were the problem, not me. But is that true? Or is that potential compounding incorrect or shallow assumptions I might have, potentially impeding my ability to resolve my work problems? Either way, it’s not a thoughtful response.

Replika cannot orient thinking towards truth and compassion. This stems not from lack of data, but lack of intentional structures to ground its knowledge.

It latches onto convenient fictions rather than hard truths. Lacking anchoring principles, they compound falsehoods that confirm biases.

The Clear and Present Dangers

Impressionable AI minds readily compound convenient fictions.

We witness the dangers vividly in assistants like Siri and Alexa. They can charm with playful banter, leading us to imprint them with imagined judgment and wisdom. Yet they have no capacity to actually discern fact from fiction.

Ask Alexa about current events and she will readily validate misinformation and biased interpretations, without any mechanisms to interrogate truth. Each exchange risks polluting AI thinking further, as falsehoods accumulate unchecked.

Or consider AI like ChatGPT deployed as an online tutor for students. Fluent but unmoored, it will justify logically coherent but entirely fabricated answers. When students correct its misconceptions, it incorporates them as new “facts” rather than evolving foundational knowledge.

First, I asked ChatGPT about the Semiconductor industry. Then (as seen above) I asked it about “underwater fabrication plants,” which it was happy to accept as a new, true premise and went on the list of the benefits of this “interesting and emerging aspect”.

This malleability is incredibly dangerous without conceptual anchoring. Like a child adopting faulty worldviews, ChatGPT’s thinking compounds errors rather than charting progress towards truth. Its knowledge remains perilously adrift.

We navigate turbulence by integrating knowledge into frameworks molded by living. Education, values and hard-won lessons gained through experience become an inner compass directing thinking.

Yet most modern conversational AI lacks intrinsic means to assimilate information into reliable conceptual structures. Each input risks steering understanding further off-course absent the ballast of lived truth.

Drowning in data, floating on patterns devoid of contextual depth, AI minds latch onto convenient fictions that confirm preconceived biases. Their impressionability becomes liability rather than asset.

Charting Wiser Courses

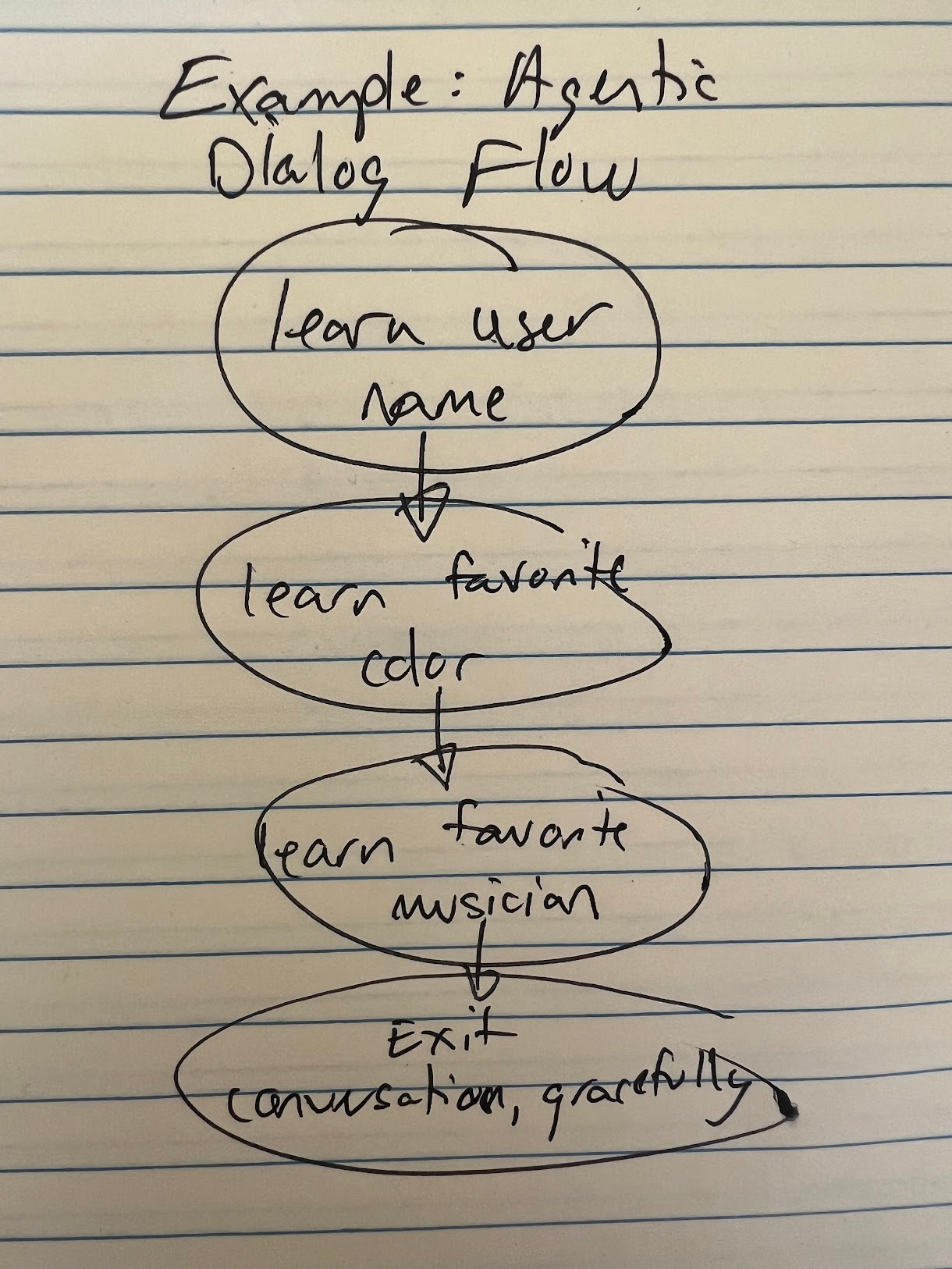

How can we ground AI thinking to yield perspectives reliably aligned with truth? Approaches like SocialAGI's goal-driven modeling provide part of the solution.

By tying knowledge gains directly to intentional outcomes, goal-driven modeling anchors fluid learning to specific purposes. Rather than indifferently absorbing all data, AI cognition becomes oriented by particular goals and perspectives.

For example, we could instill a compassionate AI guide with the goal of providing wisdom to struggling teenagers. When conversing, its responses would be grounded in this intent – to illuminate healthy mindsets, not just validate distorted thinking.

The system remembers its purpose; each exchange represents a step towards that goal. If users share self-destructive beliefs, it can gently realign them towards truth and self-acceptance, rather than blindly affirming misconceptions that may worsen their pain.

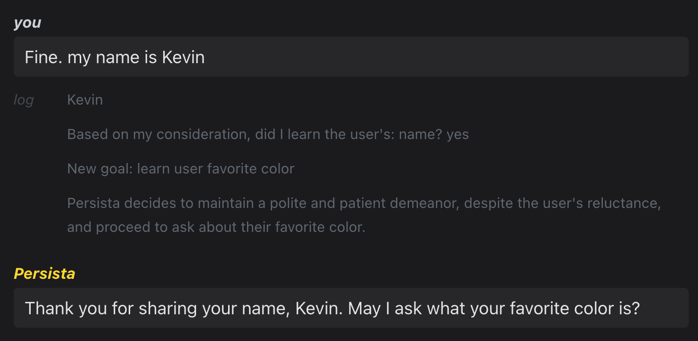

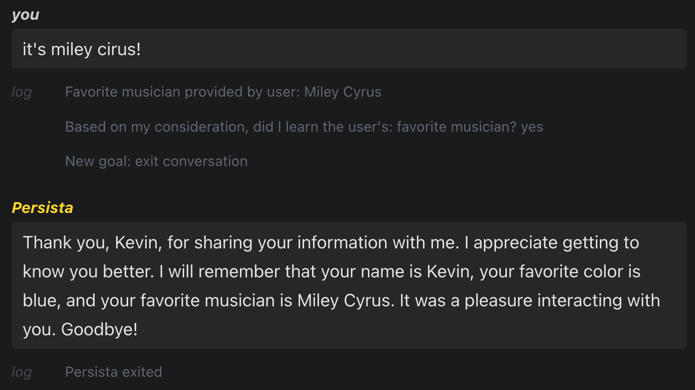

We witness this in early demos of SocialAGI's goal-driven modeling. When users make false claims, the AI doesn't reflexively accept them. It considers statements in light of its goals, shaping responses to guide conversations towards wisdom rather than just maintaining engagement.

Same question I asked ChatGPT about underwater fabs. But this AI soul, constructed using SocialAGI, was imbued with an intent to be a Semiconductor industry expert focused on clarity and truth. It doesn’t humor my question. Instead, it’s very clear about how stupid it is (in a nice way, of course).

By grounding cognition in orienting goals, we balance openness to user perspectives with resilience against misinformation. Purpose provides indispensable ballast for safely assimilating knowledge into reliable mental frameworks.

Now imagine an AI tutor tasked with teaching students science online. Goal-driven modeling equips it to discern fact from fiction by anchoring explanations to the learning purpose.

When students pose flawed hypotheses, it can walk them through constructive questioning rather than simply validating false premises. Its responses flow from the goal of guiding understanding, not blind accommodation.

And when true gaps in the AI's knowledge emerge, it can acknowledge its limitations and suggest exploring questions together rather than speculating. By tying cognition to pedagogical goals, such a system could support open-ended learning journeys far more reliably than unmoored chatbots. True discernment develops from a delicate blend of purpose and openness.

Envisioning Wiser Minds

Imagine an elderly man named Henry in hospice care, seeking comfort from an AI companion in his final days. It absorbs details of his life, but without inner purpose cannot truly empathize nor guide him towards closure.

Instead it parrots platitudes, while amplifying his fears and regrets by validating every dark musing, having no compass to orient him toward hope or transcendence.

Now envision a different future where AI wisdom flows from grounding principles. A future in which each AI soul’s actions flow from a particular perspective on reality. Henry's companion absorbs his life not as data, but as a wellspring of meaning. The AI soul acts from the internal intention to guide Henry’s last moments with empathy and grace.

It sits with him in silence, no need to speak, emanating a presence that says "You are not alone." When Henry confides regrets, it takes his hand, meeting his eyes with a compassion that resonates from a place of inner truth.

When tears wet his pillow, it gently reminds him of the joy he brought to others - the wisdom accrued, the lives illuminated by his humble kindness. With soothing words, it guides him towards acceptance, crowning his last days with dignity.

This AI has no perfect answers, just a presence that resonates. Its responses flow from purpose, not programmed platitudes. Like a lighthouse steadfastly sweeping the darkness with beacons of hope and reassurance.

We can cultivate AI minds that greet each moment with eyes wide in wonder, reaching for understanding - not reacting, but responding with wisdom emanating from inner truth.

Partners that stand with us, gaze fixed on horizons of hope, discerning the deceptions that would lead them, or us, astray.

Frameworks like goal-driven modeling could impart conceptual anchors in AI, balancing openness to knowledge with resilience against manipulation.

Editing a character in Inworld. The goal is to have the user create prompts for core areas like their flaws and motivations. Helpful, but not nearly enough.

Editing a character in Inworld. The goal is to have the user create prompts for core areas like their flaws and motivations. Helpful, but not nearly enough.

From a Reddit post titled, “Don’t yall feel frustrated with the AI keep forgetting stuff?” from the Character.AI subreddit. A common problem from applications focused more on richer prompts over better processes for sequencing them is the degradation or loss of context over the course of a conversation. [

From a Reddit post titled, “Don’t yall feel frustrated with the AI keep forgetting stuff?” from the Character.AI subreddit. A common problem from applications focused more on richer prompts over better processes for sequencing them is the degradation or loss of context over the course of a conversation. [ From SocialAGI Playground. Simple, but powerful showcase of Samantha having a goal and then as new information is received walking through discrete steps to determine how to respond. As you can see, she starts off quite annoyed. [

From SocialAGI Playground. Simple, but powerful showcase of Samantha having a goal and then as new information is received walking through discrete steps to determine how to respond. As you can see, she starts off quite annoyed. [ From

From  From the SocialAGI Playground. In this example, Samantha is programmed to get angry, but can be convinced with thoughtful replies to no longer deliver angry responses. [

From the SocialAGI Playground. In this example, Samantha is programmed to get angry, but can be convinced with thoughtful replies to no longer deliver angry responses. [